Grounded answers, cited at the sentence.

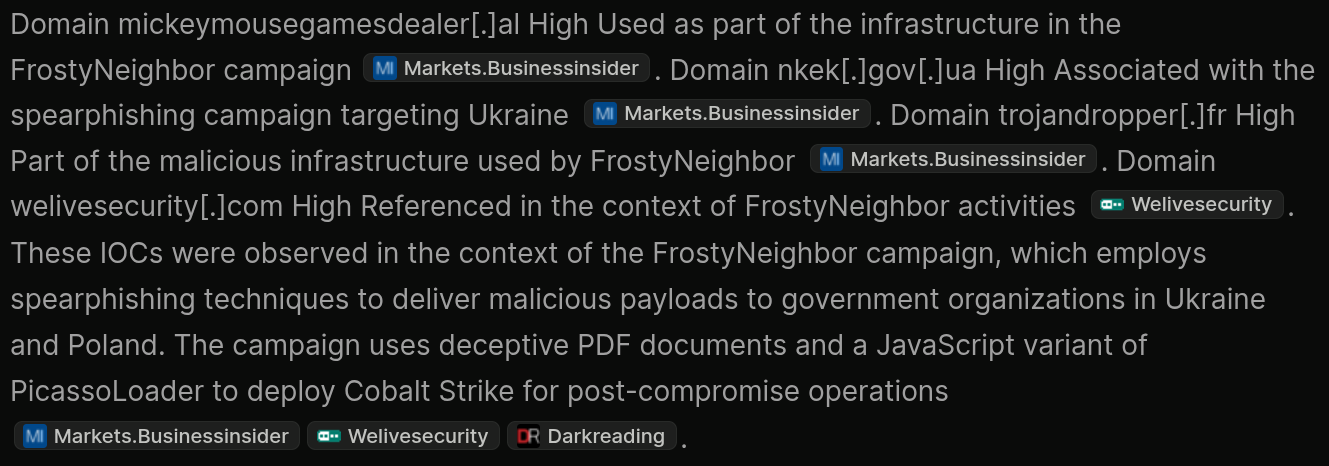

The analyst layer on top of the threat graph. Ask questions about a cluster or across the platform, and every claim in the answer carries an inline citation back to the source it came from. Not the open web.

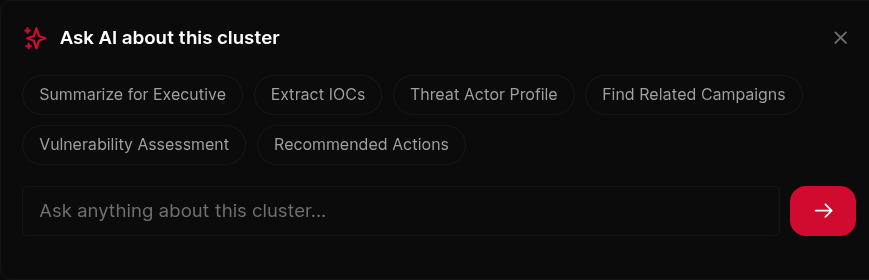

Six pre-built actions per cluster.

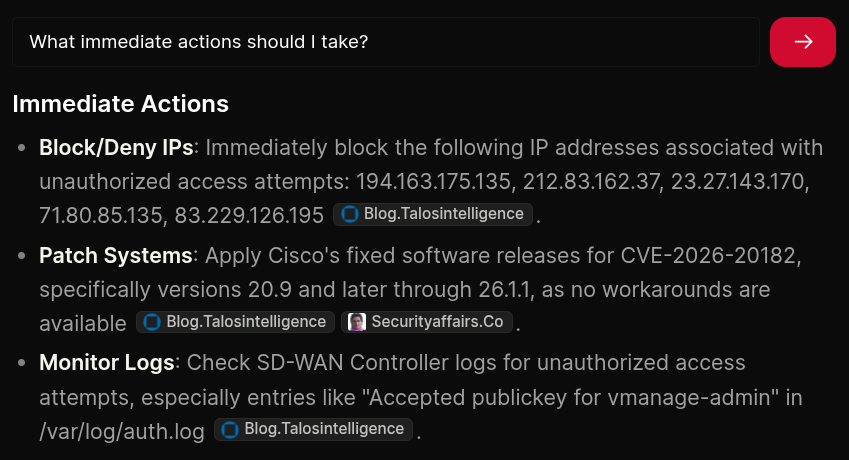

Open any cluster, click Ask AI. Six structured actions sit ready: Executive Summary, Extract IOCs, Threat Actor Profile, Find Related Campaigns, Vulnerability Assessment, and Recommended Actions.

Each action has its own prompt, tuned for a specific output format. Extract IOCs returns a defanged markdown table. Recommended Actions returns an immediate-then-detection structure. Executive Summary stays under four sentences and avoids jargon.


Free-form question, same grounding.

Ask anything about the cluster. "Which versions of Windows does this affect?" "What changes does this require to my IdP configuration?" "Which teams in my org would need to be involved to roll this out?"

The model answers from the cluster's own context. When it supplements with general industry knowledge (how a Chrome Enterprise policy works, what a typical OAuth flow looks like), it labels the passage explicitly so you know what to verify with vendor documentation.


Inline citations on every claim.

Every concrete claim in an answer carries a tag pointing back to its source. [A1] is the primary article. [S1] is a sub-article (a referenced advisory or research blog). [C1] is a CVE enrichment record. [T1] is a threat actor profile. [R1] is a related cluster.

Click any citation to jump to the source. Re-citing the same tag across an answer is expected, so a sentence with five claims and four sources isn't a wall of text; it's a traceable paragraph.
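If you export an answer and want to audit its sources, the tag scheme above is simple enough to parse. The following is a minimal sketch, assuming tags always take the form of one prefix letter followed by a number inside square brackets, as in the examples above. The helper name `extract_citations` and the sample answer text are hypothetical, not part of the product.

```python
import re

# Prefix letters and their meanings, as described in the text above.
TAG_KINDS = {
    "A": "primary article",
    "S": "sub-article",
    "C": "CVE enrichment record",
    "T": "threat actor profile",
    "R": "related cluster",
}

# Assumed tag shape: [A1], [S2], [C10], and so on.
CITATION = re.compile(r"\[([ASCTR])(\d+)\]")


def extract_citations(answer: str) -> dict[str, str]:
    """Return each distinct tag in order of first appearance, mapped to its source kind.

    Repeated tags are collapsed, since re-citing the same source is expected.
    """
    seen: dict[str, str] = {}
    for prefix, number in CITATION.findall(answer):
        seen.setdefault(f"{prefix}{number}", TAG_KINDS[prefix])
    return seen


if __name__ == "__main__":
    # Hypothetical answer text for illustration only.
    answer = (
        "The flaw is exploited in the wild [A1][C1]. "
        "A vendor patch exists [S1], per the original report [A1]."
    )
    for tag, kind in extract_citations(answer).items():
        print(f"{tag}: {kind}")
```

Collapsing repeats gives a short source list per answer, which is usually what a reviewer wants before following each citation back to its origin.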


Grounded, not general.

The model works from the cluster's own context: article bodies, sub-articles, vendor advisories, CVE enrichment, threat actor profiles, IOCs, and the top related clusters by entity overlap. Not the open web.

Off-topic questions get a polite decline and an explanation of what Ask AI is for. If a claim isn't supported in the cluster's source material, the model won't make it. You get answers you can trust enough to brief a client on.


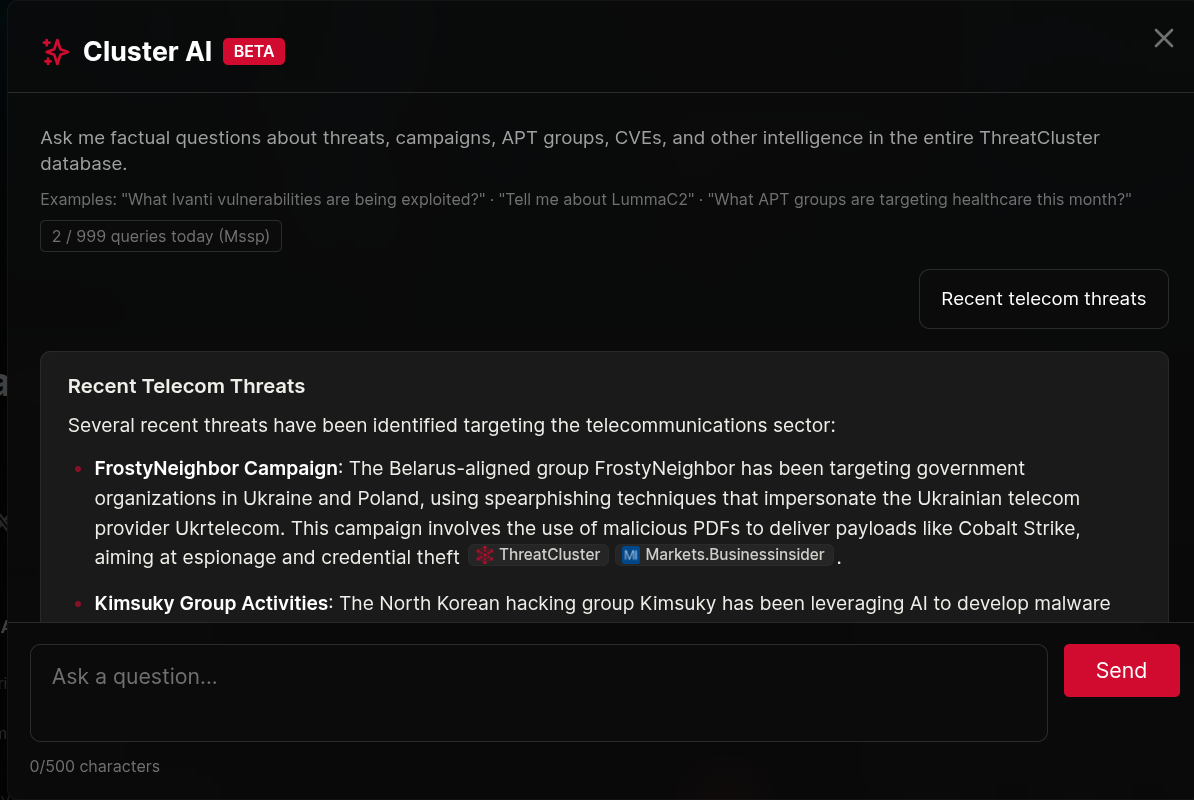

Across the platform too.

The same engine is available from the global search bar for cross-cluster questions. "Which Russian APTs targeted healthcare in the last 30 days?" "What CVEs has CL0p been exploiting this quarter?" Answers draw from the full corpus.

Daily limits scale with subscription tier. Per-cluster Ask AI runs at a tighter limit because the questions are narrower. Global Cluster AI runs at a higher limit because the queries are broader.



Ask the data. Trust the answer.

Six structured actions, free-form questions, inline source citations on every claim. Available on Researcher, Business, and MSSP plans.